Data reduction or compression techniques are important because universal laws put a premium on information. You couldn’t read all the books in the world, neither could you store them. You might make a start on reading every book by making it a team effort. In other words, you might tackle the problem with more than one brain and one pair of eyes. In communication theory terms, this approach is known as increasing the channel capacity by broadening the bandwidth. But you wouldn’t have an infinite number of people at your disposal unless you had an infinite amount of money to pay them! Likewise no one has an infinite channel capacity or an infinite bandwidth at their disposal. The similar argument applies to storage. Stated axiomatically: information, in all its forms, is using up valuable resources, so the more efficiently we can send it and store it the better. That’s where compression comes in.

If I say to you, “Wow, I had a bad night, the baby cried from three ’til six!” You understand perfectly what I mean because you know what a baby crying sounds like. I might alternatively have said, “Wow, I had a bad night, the baby did this; wah, bwah, bwah, wah …” and continue doing it for 3 h. Try it. You’ll find you lose a lot of friends because nobody needs to have it demonstrated. Most of the 3-h impersonation is superfluous. The second message is said to have a high level of redundancy in the terms of communication theory. The trick performed by any compression system is sorting out the necessary information content—sometimes called the entropy—from the redundancy. (If, like me, you find it difficult to comprehend the use of entropy in this context consider this: entropy refers here to a lack of pattern; to disorder. Everything in a signal that has a pattern is, by definition, predictable and therefore redundant. Only those parts of the signal that possess no pattern are unpredictable and therefore represent necessary information.)

All compression techniques may be divided between lossless systems and lossy systems. Lossless compression makes use of efficiency gains in the manner in which data are coded. All that is required to recover original data exactly is a decoder that implements the reverse process performed by the coder. Such a system does not confuse entropy for redundancy and hence dispense with important information. However, neither does the lossless coder perfectly divide entropy from redundancy. A good deal of redundancy remains and a lossless system is therefore only capable of relatively small compression gains. Lossy compression techniques attempt a more complete distinction between entropy and redundancy by relying on knowledge of the predictive powers of the human perceptual systems. This explains why these systems are referred to as implementing perceptual coding techniques. Unfortunately, not only are these systems inherently more complicated, they are also more likely to get things wrong and produce artifacts.

Lossless Compression

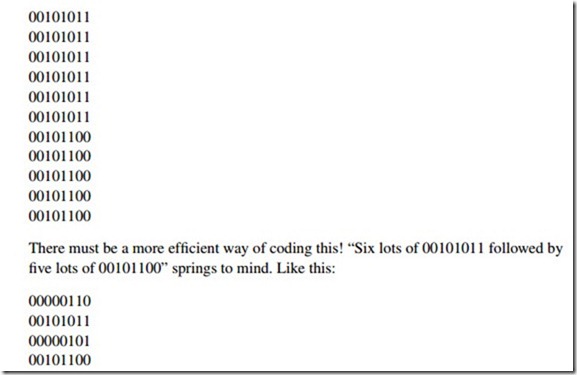

Consider the following contiguous stream of luminance bytes taken from a bit-map graphic:

This is the essence of a compression technique known as run-length encoding (RLE). RLE works really well but it has a problem. If a data file is composed of data that are predominantly nonrepetitive, RLE actually makes the file bigger! So RLE must be made adaptive so that it is only applied to strings of similar data (where redundancy is high); when the coder detects continuously changing data (where entropy is high), it simply reverts back to sending the bytes in an uncompressed form. Evidently it also has to insert a small information overhead to instruct the decoder when it is (and isn’t) applying the compression algorithm.

Another lossless compression technique is known as Huffman coding and is suitable for use with signals in which sample values appear with a known statistical frequency. The analogy with Morse code is frequently drawn, in which letters that appear frequently are allocated simple patterns and letters that appear rarely are allocated more complex patterns. A similar technique, known by the splendid name of the Lempel-Ziv-Welch (LZW) algorithm, is based on the coding of repeated data chains or patterns. A bit like

Huffman’s coding, LZW sets up a table of common patterns and codes specific instances of patterns in terms of “pointers,” which refer to much longer sequences in the table. The algorithm doesn’t use a predefined set of patterns but instead builds up a table of patterns that it “sees” from incoming data. LZW is a very effective technique—even better than RLE. However, for the really high compression ratios, made necessary by the transmission and storage of high-quality audio down low bandwidth links, different approaches are required, based on an understanding of human perception processes.

In principle, the engineering problem presented by low-data rates, and therefore in reduced digital resolution, is no different to the age-old analogue problems of reduced dynamic range. In analogue systems, noise reduction systems (either complementary, i.e., involving encoding and complementary decoding such as Dolby B and dbx1, or single ended) have been used for many years to enhance the dynamic range of inherently noisy transmission systems such as analogue tape. All of these analogue systems rely on a method called “compansion,” a word derived from the contraction of compression and expansion. The dynamic range is deliberately reduced (compressed) in the recording stage processing and recovered (expanded) in the playback electronics. In some systems this compansion acts over the whole frequency range (dbx is one such type). Others work over a selected frequency range (Dolby A, B, C, and SR). We shall see that the principle of compansion applies in just the same way to digital systems of data reduction. Furthermore, the distinction made between systems that act across the whole audio frequency spectrum and those that act selectively on ranges of frequencies (subbands) is true too of digital implementations. However, digital systems have carried the principle of subband working to a sophistication undreamed of in analogue implementations.